The Telltale Sign of a Buffering Problem

Some chatbots on WordPress sites respond word-by-word, streaming answers in real time. Others dump the entire response at once. This difference isn’t always caused by the AI model or connection speed; it’s often a server configuration issue between nginx and your application backend.

If you use a reverse proxy setup (nginx in front of Apache or another HTTP backend), your chatbot responses likely appear all at once. This happens because nginx defaults to HTTP/1.0 for these upstream connections and buffers proxied responses by default, and HTTP/1.0 lacks support for the chunked transfer encoding that streaming relies on.

How HTTP Versions Control Streaming

HTTP/1.0, finalized in 1996, was built for simple document retrieval. A client made a request, the server sent the complete document, and the connection closed. This model is inefficient for dynamic content where the final size is unknown.

HTTP/1.1, introduced in 1997, solved this with chunked transfer encoding. This allows a server to send data in a series of pieces (“chunks”) without needing to know the total content size beforehand. This is the mechanism that enables streaming: the server sends the first part of a chatbot’s response, then the next, and so on. The browser displays each chunk as it arrives.
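To make the chunk format concrete, here is a minimal sketch of how a chunked body is framed on the wire. The function name encode_chunks is made up for this illustration, and the sketch assumes plain ASCII text so that character count equals byte count. Each chunk is its length in hexadecimal, a CRLF, the data, and another CRLF; a zero-length chunk ends the body.

```shell
# Frame each argument as one HTTP/1.1 chunk: <hex length>\r\n<data>\r\n
# (encode_chunks is a hypothetical helper, not part of any tool.)
encode_chunks() {
  for piece in "$@"; do
    # ${#piece} counts characters; for ASCII text that equals the byte count.
    printf '%x\r\n%s\r\n' "${#piece}" "$piece"
  done
  # A zero-length chunk marks the end of the body.
  printf '0\r\n\r\n'
}

# cat -v makes the carriage returns visible as ^M.
encode_chunks "Hello, " "world" | cat -v
# 7^M
# Hello, ^M
# 5^M
# world^M
# 0^M
# ^M
```

Because every chunk carries its own length, the receiver can process each piece as soon as it arrives instead of waiting for a total Content-Length.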

For a chatbot, an HTTP/1.0 connection forces the server to generate the entire AI response, buffer it, and only then send it to the browser. With HTTP/1.1, the response streams as it’s generated, creating the real-time effect users expect.

The Bottleneck: Nginx’s Upstream Default

In many hosting environments, nginx acts as a reverse proxy, receiving requests from the internet and forwarding them over HTTP to an application server such as Apache. For these upstream connections, nginx defaults to the older, more compatible HTTP/1.0 protocol (the default value of proxy_http_version is 1.0).

This default works for most web traffic, like returning a complete HTML page or a JSON object. But for a streaming AI chatbot, it creates an invisible bottleneck. Response buffering (proxy_buffering) is also on by default, so nginx collects the upstream output in its buffers instead of forwarding each piece as it arrives, and the user typically receives the answer in one block.

This behavior is common on servers managed with tools like ServerPilot, RunCloud, and GridPane, as well as many custom VPS configurations. If your server runs both nginx and Apache, you likely have this setup. You can check with ps aux | grep -E "nginx|httpd|apache2" (the Apache process is named httpd on some distributions and apache2 on Debian and Ubuntu).

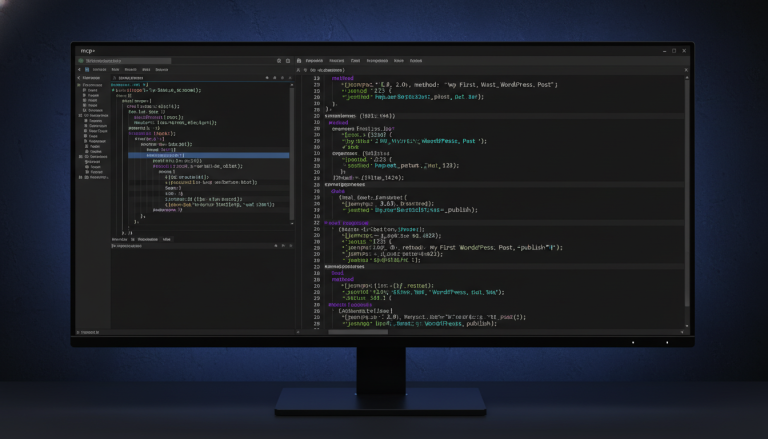

The Fix: Force HTTP/1.1 and Disable Buffering

The solution is to instruct nginx to use HTTP/1.1 for your chatbot’s API endpoint and to disable buffering for that specific connection. This allows nginx to act as a transparent pipe, passing data chunks to the browser as soon as they are received.

First, locate your site’s nginx configuration file. This is often in /etc/nginx/sites-enabled/ or /etc/nginx/conf.d/.

Inside your server block, add a new location block specifically for the chatbot endpoint. This block should be placed before any generic PHP processing rules.

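    location ~ /wp-json/pressbot/v1/(agent/)?chat {
        # Placeholder: replace with your actual upstream address.
        proxy_pass http://your_backend;
        proxy_http_version 1.1;
        proxy_buffering off;
        proxy_request_buffering off;
    }

You must replace http://your_backend with the address of your actual upstream backend. Look for an existing proxy_pass directive in your configuration file to find the correct address, which might be an IP and port like http://127.0.0.1:8080 or a Unix socket written as http://unix:/path/to/backend.sock (a placeholder path). The backend must speak HTTP. A PHP-FPM socket such as /var/run/php/php8.2-fpm.sock speaks FastCGI and is used with fastcgi_pass, so it is not a valid proxy_pass target.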
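If you are unsure where the existing proxy_pass directive lives, a recursive search is usually the quickest way to find it. The sketch below wraps that search in a small helper; the name list_proxy_pass is made up for this example, and /etc/nginx is an assumed location that may differ on your server.

```shell
# Print every uncommented proxy_pass directive under a directory,
# prefixed with its file name and line number.
# (list_proxy_pass is a hypothetical helper name.)
list_proxy_pass() {
  # -R follows symlinks, which matters for sites-enabled/ style layouts.
  grep -RnE '^[[:space:]]*proxy_pass[[:space:]]' "$1"
}

# Typical invocation (the directory is an assumption; adjust to your layout):
# list_proxy_pass /etc/nginx
```

The address printed after proxy_pass is the value to reuse in the new location block.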

Here’s what these directives do:

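- proxy_http_version 1.1; Forces nginx to use the HTTP/1.1 protocol for the upstream connection, which makes chunked transfer encoding available.

- proxy_buffering off; Prevents nginx from buffering the response from the backend server, so each piece is passed to the client as soon as nginx receives it.

- proxy_request_buffering off; Prevents nginx from reading the user’s entire request body before sending it to the backend. This affects the incoming request rather than the streamed response.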
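Once the block is in place, a quick text check can confirm that none of the three directives was mistyped or left commented out. This is only a sketch: check_streaming_directives is a hypothetical helper, it inspects a single file, and it does not verify that the directives sit inside the intended location block.

```shell
# Report whether each streaming directive appears, uncommented, in a config file.
# Returns non-zero if any directive is missing.
check_streaming_directives() {
  conf=$1
  status=0
  # Normalize each line: trim indentation, collapse whitespace,
  # and drop anything after the first semicolon (such as trailing comments).
  normalized=$(sed -E 's/^[[:space:]]+//; s/[[:space:]]+/ /g; s/ ?;.*$/;/' "$conf")
  for directive in 'proxy_http_version 1.1' 'proxy_buffering off' 'proxy_request_buffering off'; do
    if printf '%s\n' "$normalized" | grep -Fxq "$directive;"; then
      echo "found:   $directive"
    else
      echo "missing: $directive"
      status=1
    fi
  done
  return "$status"
}

# Example (the file path is a placeholder for your own site config):
# check_streaming_directives /etc/nginx/conf.d/example.conf
```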

After saving the file, test your nginx configuration for syntax errors:

    sudo nginx -t

If the test is successful, reload nginx to apply the changes:

    sudo systemctl reload nginx

Confirming the Stream is Live

Open your WordPress site and test the chatbot. The response should now appear word-by-word. This immediate visual change confirms that chunked data is flowing correctly from the backend server, through nginx, and to your browser without being buffered.
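For a check that does not rely on eyeballing the chat window, you can time the arrival of each line from the command line. The sketch below assumes the endpoint emits newline-delimited output (for example, server-sent events), which the original setup does not confirm. The helper name stamp_lines, the example.com URL, and the JSON body are placeholders, not PressBot’s documented API.

```shell
# Prefix each line from stdin with the whole seconds elapsed since the helper started.
# (stamp_lines is a hypothetical helper name.)
stamp_lines() {
  start=$(date +%s)
  while IFS= read -r line || [ -n "$line" ]; do
    printf '[+%ss] %s\n' "$(( $(date +%s) - start ))" "$line"
  done
}

# Local demonstration: two lines produced one second apart.
{ echo "first"; sleep 1; echo "second"; } | stamp_lines

# Against a live site (URL and request body are placeholders):
# curl -sS -N -X POST -H 'Content-Type: application/json' \
#   -d '{"message":"hello"}' \
#   https://example.com/wp-json/pressbot/v1/chat | stamp_lines
```

The -N flag turns off curl’s own output buffering. If the timestamps spread out over the duration of the answer, the response is streaming; if every line carries the same late timestamp, something between the backend and the client is still buffering.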

When This Fix Isn’t Necessary

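This configuration is only needed for reverse proxy setups where nginx forwards requests over HTTP to another backend. If your server uses a simpler stack, such as nginx with PHP-FPM directly or Apache on its own, you likely do not need this fix. These configurations typically handle HTTP/1.1 and chunked encoding correctly by default. Note that nginx talks to PHP-FPM through fastcgi_pass, so the proxy_* directives above have no effect there; if responses still arrive in one block on that stack, the setting to look at is fastcgi_buffering.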

Next step: If you run a reverse proxy and want to add a streaming AI chatbot to your site, check out PressBot. It supports streaming out of the box and provides conversational tools to manage your WordPress site.