Anthropic CEO Dario Amodei told the Pentagon no. Not a soft no — not a “let’s revisit the terms” — but a firm, public rejection of the Department of Defense’s final ultimatum demanding unrestricted access to Claude, Anthropic’s flagship AI model.

The stakes were real. A $200 million contract. Threats to designate Anthropic as a “supply chain risk.” Even the possibility of invoking the Defense Production Act to compel compliance. Amodei walked away from all of it, arguing that the proposed contract language offered “virtually no progress” on preventing mass surveillance of American citizens or the development of fully autonomous weapons systems.

What the Pentagon Wanted

The DoD’s position was straightforward: once it purchases technology, it should be able to dictate how that technology is used, for “all lawful purposes.” On the surface, that sounds reasonable. In practice, the contract language Anthropic was presented with would have removed the guardrails that prevent Claude from being used for domestic mass surveillance and autonomous lethal decision-making.

The Pentagon has stated it has no interest in illegal surveillance or autonomous weapons. Amodei’s response was that intent and contract language are different things. If the legal framework permits it, a future administration — or even a different office within the current one — could direct the technology toward those uses without any contractual barrier.

This isn’t hypothetical hand-wringing. Amodei made a specific argument: current AI systems are not reliable enough for autonomous lethal decisions, and using AI for mass domestic surveillance is fundamentally incompatible with democratic values. He drew a line and held it.

Why This Isn’t Just a Headline Story

AI companies face pressure from governments, enterprise clients, and investors to maximize deployment. The default incentive is to say yes — to sign the contract, collect the revenue, and worry about the edge cases later. What makes Anthropic’s position notable is that they absorbed a direct financial and political cost to maintain their safety principles.

Anthropic was founded around the idea that AI development needs built-in safety constraints. Its best-known technique, Constitutional AI, trains models against a written set of principles, so protections against misuse are shaped during training rather than bolted on as an afterthought. The Pentagon dispute is the most visible test of whether those principles hold under real pressure. So far, they have.

This matters beyond the military context. When an AI provider demonstrates that it will protect user privacy even when a $200 million contract is on the table, that tells you something about how it handles the millions of smaller, quieter decisions that shape how your data is processed every day.

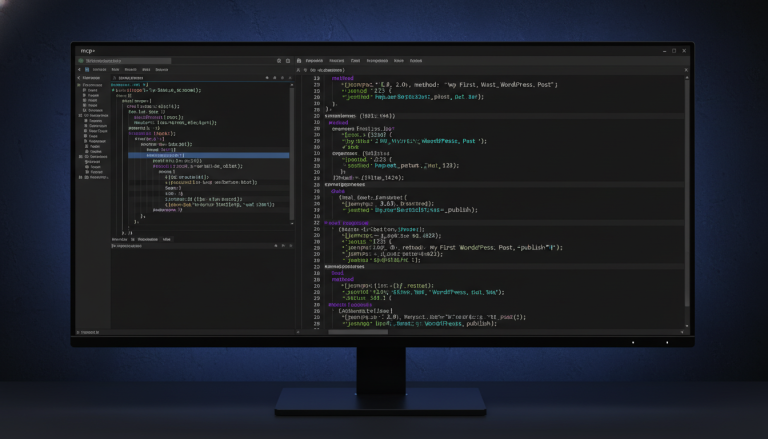

What This Means for PressBot

PressBot is built on Anthropic’s Claude models. We recommend Claude for the admin agent specifically because it delivers the strongest reasoning and most accurate tool calling of any model we’ve tested. But performance is only part of the equation.

When a PressBot user connects their Claude API key and starts managing their WordPress site through natural language — creating posts, running security audits, moderating comments, managing WooCommerce orders — every one of those interactions passes through Anthropic’s infrastructure. The question of how that provider treats data, what principles guide their decisions, and whether they’ll hold those principles under pressure isn’t abstract. It’s the foundation your site management runs on.

Here’s a concrete example of why this matters. Say you use PressBot’s admin agent to run a security audit on your WordPress site. The agent checks file permissions, SSL configuration, debug mode, admin usernames, and a dozen other vectors — then reports findings with severity ratings. That interaction contains specific details about your site’s security posture. You need to trust that the AI provider processing that request treats your data with the same seriousness they’d bring to a government negotiation. Anthropic has now demonstrated, under the most public pressure imaginable, that they do.
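To make the sensitivity of that data concrete, here is a rough sketch of what an audit report like this might look like as a data structure. This is not PressBot's actual implementation: the `Finding` type, the `audit` function, and the site snapshot keys (`debug_mode`, `ssl_enabled`, `admin_usernames`, `wp_config_mode`) are all hypothetical names chosen for illustration, and the severity assignments are assumptions.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class Finding:
    check: str          # which check produced this finding
    severity: Severity  # how urgent the issue is
    detail: str         # human-readable explanation


def audit(site: dict) -> list[Finding]:
    """Run a few illustrative checks against a hypothetical site snapshot."""
    findings = []
    if site.get("debug_mode"):
        findings.append(Finding("debug_mode", Severity.MEDIUM, "WP_DEBUG is enabled"))
    if not site.get("ssl_enabled"):
        findings.append(Finding("ssl", Severity.HIGH, "Site is not served over HTTPS"))
    if "admin" in site.get("admin_usernames", []):
        findings.append(Finding("admin_username", Severity.MEDIUM, "Default 'admin' username in use"))
    # 0o004 is the "readable by others" permission bit
    if site.get("wp_config_mode", 0o600) & 0o004:
        findings.append(Finding("file_permissions", Severity.HIGH, "wp-config.php is world-readable"))
    # Most severe findings first; ties keep the order in which checks ran
    return sorted(findings, key=lambda f: f.severity, reverse=True)


if __name__ == "__main__":
    snapshot = {
        "debug_mode": True,
        "ssl_enabled": False,
        "admin_usernames": ["admin", "editor1"],
        "wp_config_mode": 0o644,
    }
    for finding in audit(snapshot):
        print(f"[{finding.severity.name}] {finding.check}: {finding.detail}")
```

Even in this toy version, the output is effectively a prioritized list of your site's weak points, which is exactly the kind of information you would not want handled carelessly.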

We also support Google Gemini — it’s a strong option, especially for the public chatbot where its free tier makes it accessible. But for the admin agent, where accuracy, reasoning depth, and trust matter most, Claude is our recommendation. Amodei’s decision reinforces that.

Principles Under Pressure

Every AI company publishes responsible use policies. Most of them have never been tested against a threat from the Department of Defense. Anthropic’s have. Dario Amodei chose the privacy of American citizens and the ethical boundaries of AI over a nine-figure contract and the political goodwill of the most powerful military on earth.

We’re proud to build on that foundation. PressBot uses a BYOK (Bring Your Own Key) model — your API key, your provider account, your control over costs and data. When we recommend Anthropic Claude as the best choice for your WordPress AI agent, we’re recommending it on performance and on principle.

If you want to see what Claude can do for your WordPress site, get PressBot at pressbot.io and connect your Anthropic API key. The admin agent, 65 tools, Telegram integration, and the full AI-powered workflow are available in Pro — backed by a provider that just proved its commitments aren’t for sale.